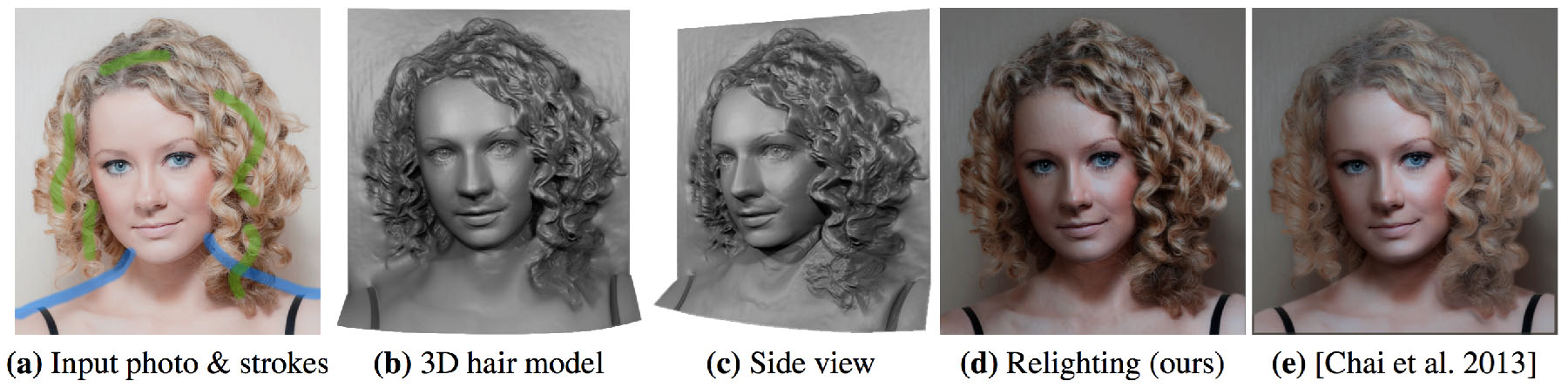

From a single photo and a few user strokes (a), our system reconstructs a 3D model that captures the intricate details of the hair (b, c). This model can be used for high-quality portrait relighting with more realistic shadowing effects (d) than the previous method (e). Original image courtesy of Ross Heale-Whittle.

We propose a novel system to reconstruct a high-quality hair depth map from a single portrait photo with minimal user input. We achieve this by combining depth cues such as occlusions, silhouettes, and shading with a novel 3D helical structural prior for hair reconstruction. We fit a parametric morphable face model to the input photo and construct a base shape in the face, hair, and body regions using occlusion and silhouette constraints. We then estimate the normals in the hair region via a Shape-from-Shading-based optimization that uses the lighting inferred from the face model and enforces an adaptive albedo prior that models the typical color and occlusion variations of hair. We introduce a 3D helical hair prior that captures the geometric structure of hair, and show that it can be recovered robustly and automatically from the input photo. Our system combines the base shape, the normals estimated by Shape-from-Shading, and the 3D helical hair prior to reconstruct high-quality 3D hair models. Our single-image reconstruction closely matches the results of a state-of-the-art multi-view stereo method applied to a multi-view stereo dataset. Our technique can reconstruct a wide variety of hairstyles, ranging from short to long and from straight to messy, and we demonstrate the use of our 3D hair models for high-quality portrait relighting, novel view synthesis, and 3D-printed portrait reliefs.
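To give some intuition for why a helical prior carries depth information, the following is a minimal sketch of a generic parametric helix, not the formulation used in our system. It assumes a helix whose axis lies along the image-plane y axis and an orthographic camera. The function names `helix_points` and `project_orthographic` and the parameters `radius`, `pitch`, and `turns` are hypothetical and chosen only for illustration.

```python
import math


def helix_points(radius, pitch, turns, samples_per_turn=16):
    """Sample 3D points on a helix whose axis is the image-plane y axis.

    x and y are image-plane coordinates; z is depth.
    Illustrative assumption only, not the paper's parameterization.
    """
    n = int(turns * samples_per_turn)
    points = []
    for i in range(n + 1):
        t = 2.0 * math.pi * i / samples_per_turn  # phase angle along the strand
        x = radius * math.cos(t)                  # lateral offset seen in the image
        y = pitch * t / (2.0 * math.pi)           # advance along the helix axis
        z = radius * math.sin(t)                  # depth, a quarter turn out of phase with x
        points.append((x, y, z))
    return points


def project_orthographic(points):
    """Drop the depth coordinate to obtain the 2D image-plane curve."""
    return [(x, y) for x, y, _ in points]


if __name__ == "__main__":
    strand = helix_points(radius=1.0, pitch=2.0, turns=2)
    curve = project_orthographic(strand)
    print(len(strand), curve[0], strand[4])
```

Under these assumptions the projected curve is a sinusoid in the image, and the depth oscillates with the same amplitude a quarter turn out of phase. This illustrates how a 2D strand shape, together with a helical assumption, can constrain depth up to the usual front-or-back ambiguity.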